PhD in Computer Science, Artificial Intelligence concentration • September 2021

M.S. in Computer Science • June 2017

B.S. in Computer Engineering, Magna Cum Laude • May 2015

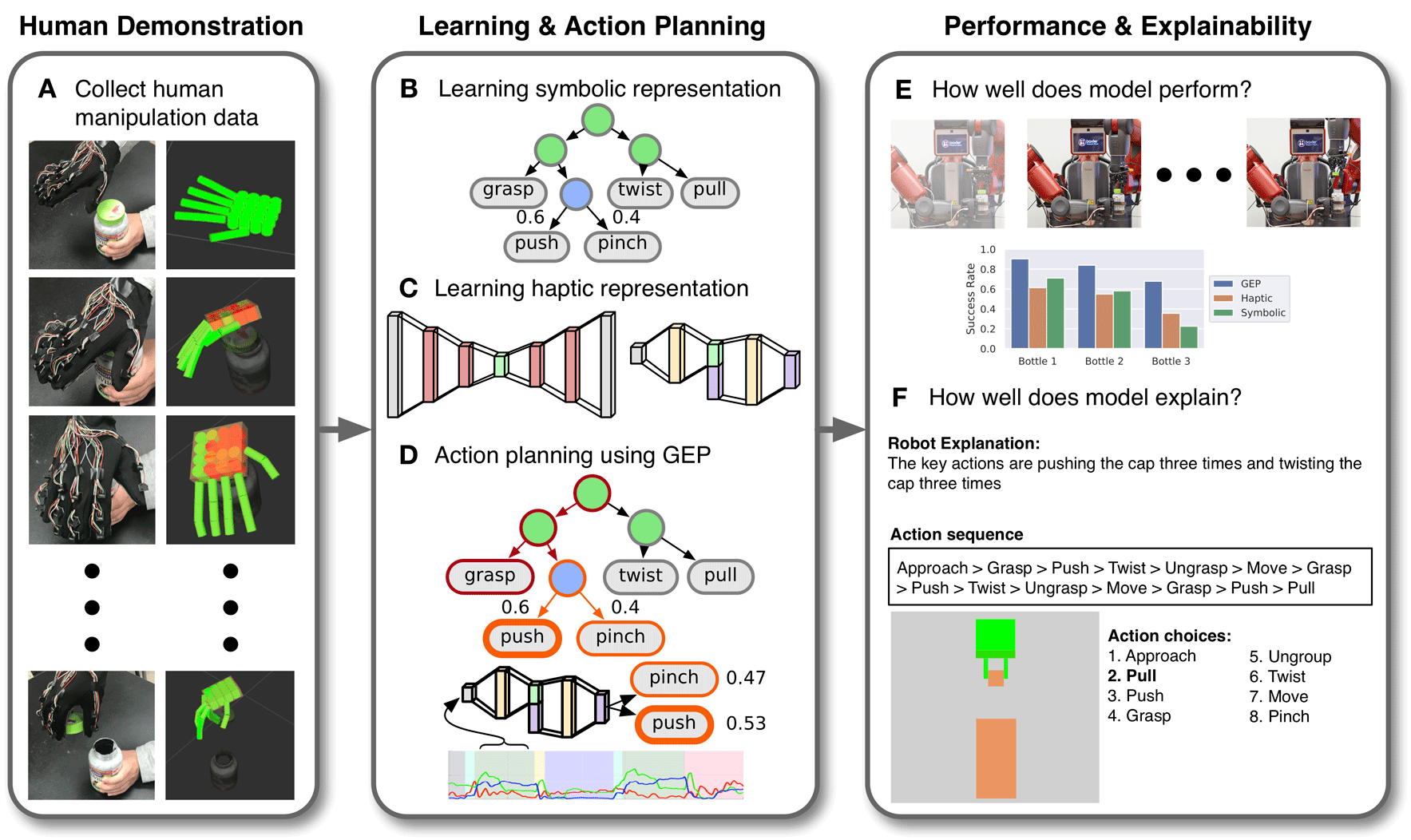

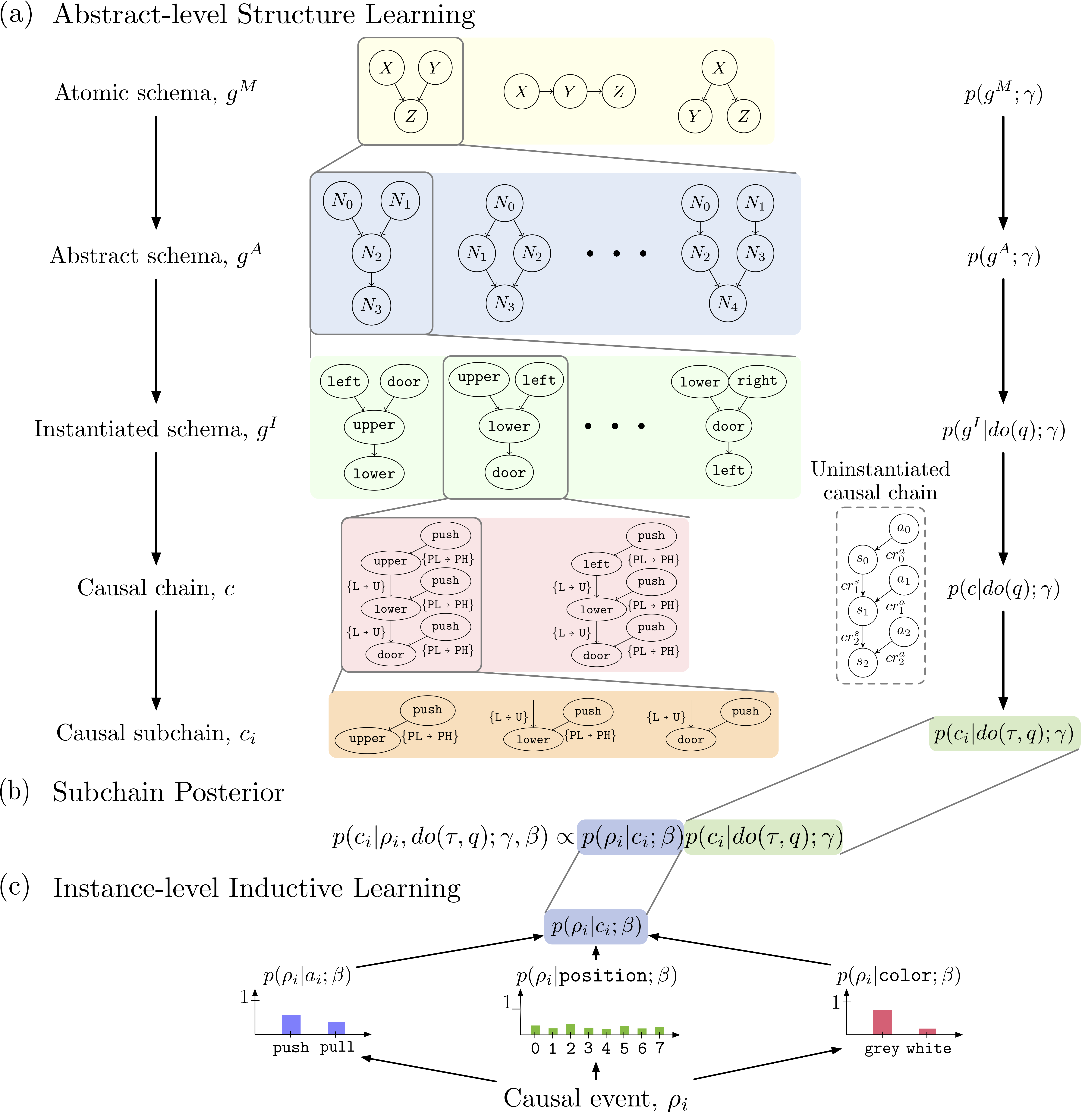

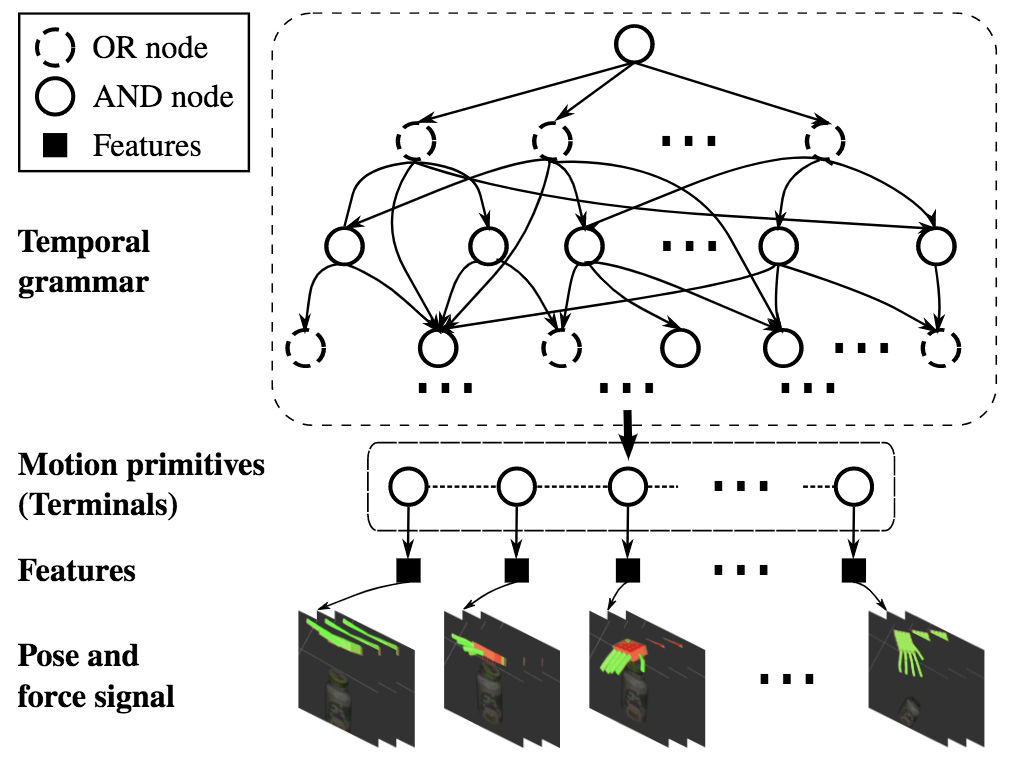

Humans build generalizable and explainable representations of their environment through interaction, observation, imitation, intervention, and language. The following research, produced in a collaborative, team-based lab, explores how artificial agents can use these five concepts to learn robust and transferable representations of tasks and environments.

Center for Vision, Cognition, Learning, and Autonomy, UCLA • Mar 2018 - Present

Center for Vision, Cognition, Learning, and Autonomy, UCLA • Feb 2018 - Dec 2019

Center for Vision, Cognition, Learning, and Autonomy, UCLA • Feb 2017 - Feb 2020

Center for Vision, Cognition, Learning, and Autonomy, UCLA • December 2015 - Feb 2017

Wright Patterson Air Force Base, University of Dayton • January 2014 - September 2015

University of Dayton Senior Design Project • August 2014 - May 2015

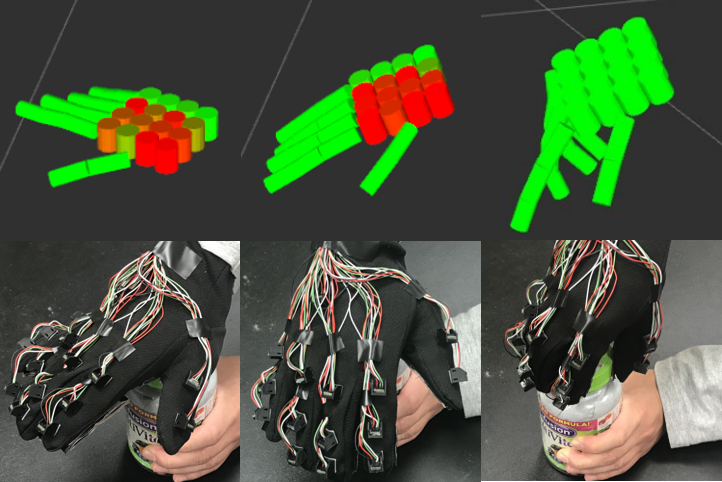

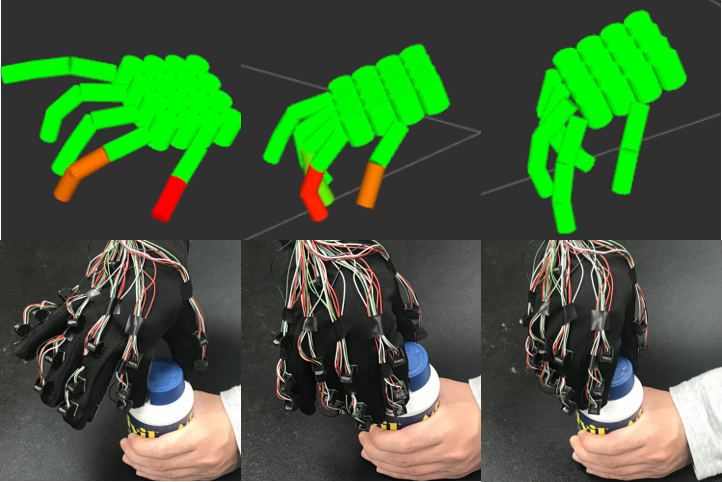

Science Robotics, Volume 7, Issue 68, 2022

UCLA Dissertation

Engineering, 2020

Science Robotics, Volume 4, Issue 37, 2019

Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), 2020

41st Annual Meeting of the Cognitive Science Society (CogSci), 2019

IEEE Transactions on Parallel and Distributed Systems (TPDS), March 2019

40th Annual Meeting of the Cognitive Science Society (CogSci), 2018

International Conference on Robotics and Automation (ICRA), 2018

International Conference on Intelligent Robots and Systems (IROS), 2017

International Conference on Intelligent Robots and Systems (IROS), 2017

Software Engineering, Artificial Intelligence, Networking and Parallel/Distributed Computing (SNPD), 2015

Aerospace and Electronics Conference (NAECON), 2015

Center for AI and Robot Autonomy (CARA) • Mar 2021 - Present

Santa Monica College • June 2016 - Present

Center for AI and Robot Autonomy (CARA) • June 2018 - Mar 2020

Computer Science Department, UCLA • September 2015 - June 2016

Electric & Computer Engineering Department, University of Dayton • January 2015 - May 2015

Garmin International • May 2013 - August 2013

School of Engineering, University of Dayton • September 2012 - May 2015

Cristo Rey Kansas City • May 2011 - August 2012

If you have any questions about me, my research interests, or my work, please reach out at mark@mjedmonds.com. Interesting thoughts from interesting people are always welcome.